The function irace implements the Iterated Racing procedure for parameter tuning. It receives a configuration scenario and a parameter space to be tuned, and returns the best configurations found, namely, the elite configurations obtained from the last iterations. As a first step, it checks the correctness of the scenario using checkScenario() and recovers a previous execution if scenario$recoveryFile is set. An R data file log of the execution is created in scenario$logFile.

Arguments

- scenario

list()

Data structure containing irace settings. The data structure has to be the one returned by the function defaultScenario() or readScenario().

Value

(data.frame)

A data frame with the set of best algorithm configurations found by irace. The data frame has the following columns:

- .ID.: Internal id of the candidate configuration.
- Parameter names: One column per parameter name in parameters.
- .PARENT.: Internal id of the parent candidate configuration.
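As a minimal sketch of how the returned data frame can be handled, the snippet below builds a stand-in with the documented columns and keeps only the parameter columns of the first row using base R. The values are made up for illustration, and the assumption that the first row is the best configuration is taken from the example output further down; irace's own removeConfigurationsMetaData(), used in the Examples, is the helper intended for this job.

```r
# Stand-in for the value returned by irace() (illustrative values only).
tuned_confs <- data.frame(
  .ID.     = c(1L, 46L, 12L),
  tmax     = c(18L, 6L, 10L),
  temp     = c(27.2408, 29.2441, 70.9908),
  .PARENT. = c(NA, 1L, NA)
)

# Everything except the two documented metadata columns is a parameter.
param_cols <- setdiff(names(tuned_confs), c(".ID.", ".PARENT."))

# Parameter values of the first row (assumed here to be the best one).
best <- tuned_confs[1, param_cols, drop = FALSE]

print(param_cols)
print(best)
```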

Additionally, this function saves an R data file containing an object called iraceResults. The path of the file is indicated in scenario$logFile.
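As a rough illustration of how such a file can be inspected with base R alone, the snippet below saves a stand-in object named iraceResults to a temporary file and loads it back into a separate environment. The file path and the contents of the stand-in are assumptions made for the example; a real log is written by irace to scenario$logFile.

```r
# Stand-in for a real log: a list saved under the name 'iraceResults'.
# (Hypothetical contents; a real log is produced by irace itself.)
iraceResults <- list(iterationElites = c(1L, 1L, 46L))
log_file <- tempfile(fileext = ".Rdata")
save(iraceResults, file = log_file)
rm(iraceResults)

# Load into a fresh environment so nothing in the workspace is overwritten.
log_env <- new.env()
loaded_names <- load(log_file, envir = log_env)
results <- log_env$iraceResults

print(loaded_names)
print(results$iterationElites)
```

Loading into a dedicated environment is a design choice rather than a requirement: it avoids silently replacing an object of the same name that may already exist in the session.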

The iraceResults object is a list with the following structure:

- scenario: The scenario R object containing the irace options used for the execution. See defaultScenario for more information. The element scenario$parameters contains the parameters R object that describes the target algorithm parameters. See readParameters.
- allConfigurations: The target algorithm configurations generated by irace. This object is a data frame in which each row is a candidate configuration. The first column (.ID.) is the internal identifier of the configuration; the following columns hold the parameter values, each column named after the parameter as specified in the parameters object. The final column (.PARENT.) is the identifier of the configuration whose model the current configuration was sampled from.
- allElites: A list with one element per iteration; each element contains the internal identifiers of the elite candidate configurations of the corresponding iteration (identifiers correspond to allConfigurations$.ID.).
- iterationElites: A vector containing the internal identifier of the best candidate configuration of each iteration. The best configuration found corresponds to the last element of this vector.
- experiments: A matrix with configurations as columns and instances as rows. Column names correspond to the internal identifier of the configuration (allConfigurations$.ID.).
- experiment_log: A data.table with columns iteration, instance, configuration, time. This table contains the log of all the experiments that irace performs during its execution. The instance column refers to the index of the race_state$instances_log data frame. Time is saved ONLY when reported by the targetRunner.
- softRestart: A logical vector that indicates whether a soft restart was performed in each iteration. If FALSE, no soft restart was performed.
- state: An environment that contains the state of irace; recovery is done using the information contained in this object.
- testing: A list that contains the testing results. The elements of this list are:
  - experiments: a matrix with the testing experiments of the selected configurations, in the same format as the experiments matrix explained above.
  - seeds: a vector with the seeds used to execute each experiment.
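The structure described above can be navigated with ordinary list and data frame operations. The following sketch uses a hand-built stand-in that mimics only a few of the documented elements (all values are illustrative, not real irace output) to look up the best configuration, the final elites, and the mean cost of each configuration.

```r
# Hand-built stand-in mimicking part of the documented layout.
iraceResults <- list(
  allConfigurations = data.frame(
    .ID.     = c(1L, 12L, 46L),
    tmax     = c(18L, 10L, 6L),
    temp     = c(27.2408, 70.9908, 29.2441),
    .PARENT. = c(NA, NA, 1L)
  ),
  allElites       = list(c(1L, 12L), c(1L, 46L, 12L)),
  iterationElites = c(1L, 1L),
  # Instances as rows, configurations as columns; NA = not evaluated.
  experiments = matrix(
    c(0.15, 0.31, 1.02,
      0.21, 0.45,   NA,
      0.07, 0.27, 1.39),
    nrow = 3,
    dimnames = list(NULL, c("1", "12", "46"))
  )
)

# The best configuration overall is the last entry of iterationElites.
best_id <- iraceResults$iterationElites[length(iraceResults$iterationElites)]
confs <- iraceResults$allConfigurations
best_conf <- confs[confs$.ID. == best_id, ]

# The final elites are the last element of allElites.
final_elites <- iraceResults$allElites[[length(iraceResults$allElites)]]

# Mean cost per configuration over the instances where it was evaluated.
mean_cost <- colMeans(iraceResults$experiments, na.rm = TRUE)

print(best_conf)
print(final_elites)
print(mean_cost[as.character(final_elites)])
```

Indexing mean_cost by as.character(final_elites) relies on the documented convention that the column names of experiments are the configuration identifiers.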

Details

The execution of this function is reproducible under some conditions. See the FAQ section in the User Guide.

See also

- irace_main(): a higher-level interface to irace().
- irace_cmdline(): a command-line interface to irace().
- readScenario(): for reading a configuration scenario from a file.
- readParameters(): read the target algorithm parameters from a file.
- defaultScenario(): returns the default scenario settings of irace.
- checkScenario(): to check that the scenario is valid.

Examples

if (FALSE) { # \dontrun{

# In general, there are two steps: read the scenario, then run irace.

scenario <- readScenario(filename = "scenario.txt")

irace(scenario = scenario)

} # }

#######################################################################

# This example illustrates how to tune the parameters of the simulated

# annealing algorithm (SANN) provided by the optim() function in the

# R stats package. The goal in this example is to optimize instances of

# the following family:

# f(x) = lambda * f_rastrigin(x) + (1 - lambda) * f_rosenbrock(x)

# where lambda follows a normal distribution whose mean is 0.9 and

# standard deviation is 0.02. f_rastrigin and f_rosenbrock are the

# well-known Rastrigin and Rosenbrock benchmark functions (taken from

# the cmaes package). In this scenario, different instances are given

# by different values of lambda.

#######################################################################

## First we provide an implementation of the functions to be optimized:

f_rosenbrock <- function (x) {
  d <- length(x)
  z <- x + 1
  hz <- z[1L:(d - 1L)]
  tz <- z[2L:d]
  sum(100 * (hz^2 - tz)^2 + (hz - 1)^2)
}

f_rastrigin <- function (x) {
  sum(x * x - 10 * cos(2 * pi * x) + 10)
}

## We generate 20 instances (in this case, weights):

weights <- rnorm(20, mean = 0.9, sd = 0.02)

## On this set of instances, we are interested in optimizing two

## parameters of the SANN algorithm: tmax and temp. We set up the

## parameter space as follows:

parameters_table <- '
 tmax "" i,log (1, 5000)
 temp "" r (0, 100)
 '

## We use the irace function readParameters to read this table:

parameters <- readParameters(text = parameters_table)

## Next, we define the function that will evaluate each candidate

## configuration on a single instance. For simplicity, we restrict to

## three-dimensional functions and we set the maximum number of

## iterations of SANN to 1000.

target_runner <- function(experiment, scenario)
{
  instance <- experiment$instance
  configuration <- experiment$configuration

  D <- 3
  par <- runif(D, min = -1, max = 1)
  fn <- function(x) {
    weight <- instance
    return(weight * f_rastrigin(x) + (1 - weight) * f_rosenbrock(x))
  }
  # For reproducible results, we should use the random seed given by
  # experiment$seed to set the random seed of the target algorithm.
  res <- withr::with_seed(experiment$seed,
                          stats::optim(par, fn, method = "SANN",
                                       control = list(maxit = 1000,
                                                      tmax = as.numeric(configuration[["tmax"]]),
                                                      temp = as.numeric(configuration[["temp"]]))))
  ## This list may also contain:
  ## - 'time' if irace is called with 'maxTime'
  ## - 'error' is a string used to report an error
  ## - 'outputRaw' is a string used to report the raw output of calls to
  ##   an external program or function.
  ## - 'call' is a string used to report how target_runner called the
  ##   external program or function.
  return(list(cost = res$value))
}

## We define a configuration scenario by setting targetRunner to the

## function defined above, instances to the first 10 random weights, and

## a maximum budget of 'maxExperiments' calls to targetRunner.

scenario <- list(targetRunner = target_runner,
                 instances = weights[1:10],
                 maxExperiments = 500,
                 # Do not create a logFile
                 logFile = "",
                 parameters = parameters)

## We check that the scenario is valid. This will also try to execute

## target_runner.

checkIraceScenario(scenario)

#> # 2026-05-14 19:30:15 UTC: Checking scenario

#> ## irace scenario:

#> scenarioFile = "./scenario.txt"

#> execDir = "/home/runner/work/irace/irace/docs/reference"

#> parameterFile = "/home/runner/work/irace/irace/docs/reference/parameters.txt"

#> parameters = <environment>

#> initConfigurations = NULL

#> configurationsFile = ""

#> logFile = ""

#> recoveryFile = ""

#> instances = c(0.871999129665565, 0.905106341096905, 0.851254727775609, 0.899888574265077, 0.912431054428304, 0.922968232120521, 0.863563646780467, 0.89505349395853, 0.895116007864432, 0.894345891023711)

#> trainInstancesDir = ""

#> trainInstancesFile = ""

#> sampleInstances = TRUE

#> testInstancesDir = ""

#> testInstancesFile = ""

#> testInstances = NULL

#> testNbElites = 1L

#> testIterationElites = FALSE

#> testType = "friedman"

#> firstTest = 5L

#> blockSize = 1L

#> eachTest = 1L

#> targetRunner = function (experiment, scenario) { instance <- experiment$instance configuration <- experiment$configuration D <- 3 par <- runif(D, min = -1, max = 1) fn <- function(x) { weight <- instance return(weight * f_rastrigin(x) + (1 - weight) * f_rosenbrock(x)) } res <- withr::with_seed(experiment$seed, stats::optim(par, fn, method = "SANN", control = list(maxit = 1000, tmax = as.numeric(configuration[["tmax"]]), temp = as.numeric(configuration[["temp"]])))) return(list(cost = res$value))}

#> targetRunnerLauncher = ""

#> targetCmdline = "{configurationID} {instanceID} {seed} {instance} {bound} {targetRunnerArgs}"

#> targetRunnerRetries = 0L

#> targetRunnerTimeout = 0L

#> targetRunnerData = ""

#> targetRunnerParallel = NULL

#> targetEvaluator = NULL

#> deterministic = FALSE

#> maxExperiments = 500L

#> minExperiments = NA_character_

#> maxTime = 0L

#> budgetEstimation = 0.05

#> minMeasurableTime = 0.01

#> parallel = 0L

#> loadBalancing = TRUE

#> mpi = FALSE

#> batchmode = "0"

#> quiet = FALSE

#> debugLevel = 2L

#> seed = NA_character_

#> softRestart = TRUE

#> softRestartThreshold = 1e-04

#> elitist = TRUE

#> elitistNewInstances = 1L

#> elitistLimit = 2L

#> repairConfiguration = NULL

#> capping = FALSE

#> cappingAfterFirstTest = FALSE

#> cappingType = "median"

#> boundType = "candidate"

#> boundMax = NULL

#> boundDigits = 0L

#> boundPar = 1L

#> boundAsTimeout = TRUE

#> postselection = TRUE

#> aclib = FALSE

#> nbIterations = 0L

#> nbExperimentsPerIteration = 0L

#> minNbSurvival = 0L

#> nbConfigurations = 0L

#> mu = 5L

#> confidence = 0.95

#> ## end of irace scenario

#> # 2026-05-14 19:30:15 UTC: Checking target runner.

#> # 2026-05-14 19:30:15 UTC: Executing targetRunner (2 times)...

#> # targetRunner returned:

#> [[1]]

#> [[1]]$cost

#> [1] 6.44076726938045

#>

#>

#> [[2]]

#> [[2]]$cost

#> [1] 13.4983596075698

#>

#>

#> # 2026-05-14 19:30:16 UTC: Check successful.

#> [1] TRUE

# \donttest{

## We are now ready to launch irace. We do it by means of the irace

## function. The function will print information about its

## progress. This may require a few minutes, so it is not run by default.

tuned_confs <- irace(scenario = scenario)

#> # 2026-05-14 19:30:16 UTC: Initialization

#> # Elitist race

#> # Elitist new instances: 1

#> # Elitist limit: 2

#> # nbIterations: 3

#> # minNbSurvival: 3

#> # nbParameters: 2

#> # seed: 776540750

#> # confidence level: 0.95

#> # budget: 500

#> # mu: 5

#> # deterministic: FALSE

#>

#> # 2026-05-14 19:30:16 UTC: Iteration 1 of 3

#> # experimentsUsed: 0

#> # remainingBudget: 500

#> # currentBudget: 166

#> # nbConfigurations: 27

#> # Markers:

#> x No test is performed.

#> c Configurations are discarded only due to capping.

#> - The test is performed and some configurations are discarded.

#> = The test is performed but no configuration is discarded.

#> ! The test is performed and configurations could be discarded but elite configurations are preserved.

#> . Alive configurations were already evaluated on this instance and nothing is discarded.

#> : All alive configurations are elite, but some need to be evaluated on this instance.

#>

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> | | Instance| Alive| Best| Mean best| Exp so far| W time| rho|KenW| Qvar|

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> |x| 1| 27| 13| 0.1545836763| 27|00:00:00| NA| NA| NA|

#> |x| 2| 27| 12| 0.2124022719| 54|00:00:00|+0.42|0.71|0.5968|

#> |x| 3| 27| 1| 0.3064025767| 81|00:00:00|+0.29|0.53|0.7719|

#> |x| 4| 27| 1| 0.3305203724| 108|00:00:00|+0.30|0.48|0.7510|

#> |-| 5| 12| 1| 0.3111555450| 135|00:00:00|-0.06|0.15|1.0198|

#> |=| 6| 12| 12| 1.020941793| 147|00:00:00|+0.03|0.19|0.9575|

#> |=| 7| 12| 1| 1.123570759| 159|00:00:00|+0.03|0.17|0.9332|

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> Best-so-far configuration: 1 mean value: 1.123570759

#> Description of the best-so-far configuration:

#> .ID. tmax temp .PARENT.

#> 1 1 18 27.2408 NA

#>

#> # 2026-05-14 19:30:17 UTC: Elite configurations (first number is the configuration ID; listed from best to worst according to the sum of ranks):

#> tmax temp

#> 1 18 27.2408

#> 12 10 70.9908

#> 17 1 99.1158

#> # 2026-05-14 19:30:17 UTC: Iteration 2 of 3

#> # experimentsUsed: 159

#> # remainingBudget: 341

#> # currentBudget: 170

#> # nbConfigurations: 23

#> # Markers:

#> x No test is performed.

#> c Configurations are discarded only due to capping.

#> - The test is performed and some configurations are discarded.

#> = The test is performed but no configuration is discarded.

#> ! The test is performed and configurations could be discarded but elite configurations are preserved.

#> . Alive configurations were already evaluated on this instance and nothing is discarded.

#> : All alive configurations are elite, but some need to be evaluated on this instance.

#>

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> | | Instance| Alive| Best| Mean best| Exp so far| W time| rho|KenW| Qvar|

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> |x| 8| 23| 40| 0.06933980712| 23|00:00:00| NA| NA| NA|

#> |x| 5| 23| 41| 0.4561997342| 43|00:00:00|+0.21|0.60|0.9330|

#> |x| 7| 23| 46| 0.2667608730| 63|00:00:00|+0.04|0.36|0.9758|

#> |x| 6| 23| 46| 1.394460175| 83|00:00:00|+0.07|0.30|0.8598|

#> |=| 2| 23| 46| 1.175140175| 103|00:00:00|+0.03|0.22|0.9236|

#> |=| 1| 23| 46| 0.9859970110| 123|00:00:00|+0.10|0.25|0.9161|

#> |=| 4| 23| 12| 1.048850627| 143|00:00:00|+0.05|0.19|0.9548|

#> |=| 3| 23| 1| 1.146602598| 163|00:00:00|+0.03|0.16|0.9637|

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> Best-so-far configuration: 1 mean value: 1.146602598

#> Description of the best-so-far configuration:

#> .ID. tmax temp .PARENT.

#> 1 1 18 27.2408 NA

#>

#> # 2026-05-14 19:30:18 UTC: Elite configurations (first number is the configuration ID; listed from best to worst according to the sum of ranks):

#> tmax temp

#> 1 18 27.2408

#> 46 6 29.2441

#> 12 10 70.9908

#> # 2026-05-14 19:30:18 UTC: Iteration 3 of 3

#> # experimentsUsed: 322

#> # remainingBudget: 178

#> # currentBudget: 178

#> # nbConfigurations: 22

#> # Markers:

#> x No test is performed.

#> c Configurations are discarded only due to capping.

#> - The test is performed and some configurations are discarded.

#> = The test is performed but no configuration is discarded.

#> ! The test is performed and configurations could be discarded but elite configurations are preserved.

#> . Alive configurations were already evaluated on this instance and nothing is discarded.

#> : All alive configurations are elite, but some need to be evaluated on this instance.

#>

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> | | Instance| Alive| Best| Mean best| Exp so far| W time| rho|KenW| Qvar|

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> |x| 9| 22| 62| 0.06915026542| 22|00:00:00| NA| NA| NA|

#> |x| 8| 22| 52| 0.1281465823| 41|00:00:00|+0.27|0.63|0.8515|

#> |x| 5| 22| 62| 1.061445389| 60|00:00:00|-0.00|0.33|1.0462|

#> |x| 4| 22| 62| 0.9178033809| 79|00:00:00|+0.05|0.28|1.0095|

#> |=| 6| 22| 62| 0.7745033012| 98|00:00:00|+0.05|0.24|0.9954|

#> |=| 3| 22| 61| 0.7662212359| 117|00:00:00|+0.03|0.20|0.9407|

#> |=| 7| 22| 62| 1.411663631| 136|00:00:00|+0.03|0.17|0.9337|

#> |=| 1| 22| 62| 1.283452154| 155|00:00:00|+0.06|0.18|0.9015|

#> |-| 2| 15| 1| 1.055319414| 174|00:00:00|-0.05|0.07|0.9792|

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> Best configuration for the instances in this race: 1

#> Best-so-far configuration: 46 mean value: 1.348247381

#> Description of the best-so-far configuration:

#> .ID. tmax temp .PARENT.

#> 46 46 6 29.2441 1

#>

#> # 2026-05-14 19:30:19 UTC: Elite configurations (first number is the configuration ID; listed from best to worst according to the sum of ranks):

#> tmax temp

#> 46 6 29.2441

#> 1 18 27.2408

#> 12 10 70.9908

#> # 2026-05-14 19:30:19 UTC: Stopped because there is not enough budget left to race more than the minimum (3).

#> # You may either increase the budget or set 'minNbSurvival' to a lower value.

#> # Iteration: 4

#> # nbIterations: 4

#> # experimentsUsed: 496

#> # timeUsed: 0

#> # remainingBudget: 4

#> # currentBudget: 4

#> # number of elites: 3

#> # nbConfigurations: 3

#> # Total CPU user time: 3.591, CPU sys time: 0.01, Wall-clock time: 3.602

#> # 2026-05-14 19:30:19 UTC: Starting post-selection:

#> # Configurations selected: 46, 1, 12, 48.

#> # Pending instances: 0, 0, 0, 0.

#> # 2026-05-14 19:30:19 UTC: seed: 776540750

#> # Configurations: 4

#> # Available experiments: 4

#> # minSurvival: 1

#> # Markers:

#> x No test is performed.

#> c Configurations are discarded only due to capping.

#> - The test is performed and some configurations are discarded.

#> = The test is performed but no configuration is discarded.

#> ! The test is performed and configurations could be discarded but elite configurations are preserved.

#> . Alive configurations were already evaluated on this instance and nothing is discarded.

#> : All alive configurations are elite, but some need to be evaluated on this instance.

#>

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> | | Instance| Alive| Best| Mean best| Exp so far| W time| rho|KenW| Qvar|

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> |.| 7| 4| 46| 0.1476415099| 0|00:00:00| NA| NA| NA|

#> |.| 5| 4| 46| 0.1972832785| 0|00:00:00|+0.00|0.50|1.1088|

#> |.| 2| 4| 46| 0.2308089107| 0|00:00:00|-0.13|0.24|0.8033|

#> |.| 9| 4| 46| 0.9347023005| 0|00:00:00|+0.10|0.33|0.6936|

#> |.| 8| 4| 46| 0.8289050528| 0|00:00:00|+0.08|0.26|0.6133|

#> |.| 6| 4| 46| 1.487013891| 0|00:00:00|-0.11|0.08|0.7762|

#> |.| 3| 4| 46| 1.280905894| 0|00:00:00|-0.09|0.07|0.7853|

#> |.| 1| 4| 46| 1.125827806| 0|00:00:00|-0.02|0.11|0.7271|

#> |.| 4| 4| 46| 1.348247381| 0|00:00:00|+0.00|0.11|0.6897|

#> |=| 10| 4| 1| 0.9601822421| 4|00:00:00|-0.03|0.08|0.7222|

#> +-+-----------+-----------+-----------+----------------+-----------+--------+-----+----+------+

#> Best-so-far configuration: 1 mean value: 0.9601822421

#> Description of the best-so-far configuration:

#> .ID. tmax temp .PARENT.

#> 1 1 18 27.2408 NA

#>

#> # 2026-05-14 19:30:19 UTC: Elite configurations (first number is the configuration ID; listed from best to worst according to the sum of ranks):

#> tmax temp

#> 1 18 27.2408

#> 46 6 29.2441

#> 12 10 70.9908

#> # Total CPU user time: 3.646, CPU sys time: 0.013, Wall-clock time: 3.66

## We can print the best configurations found by irace as follows:

configurations_print(tuned_confs)

#> tmax temp

#> 1 18 27.2408

#> 46 6 29.2441

#> 12 10 70.9908

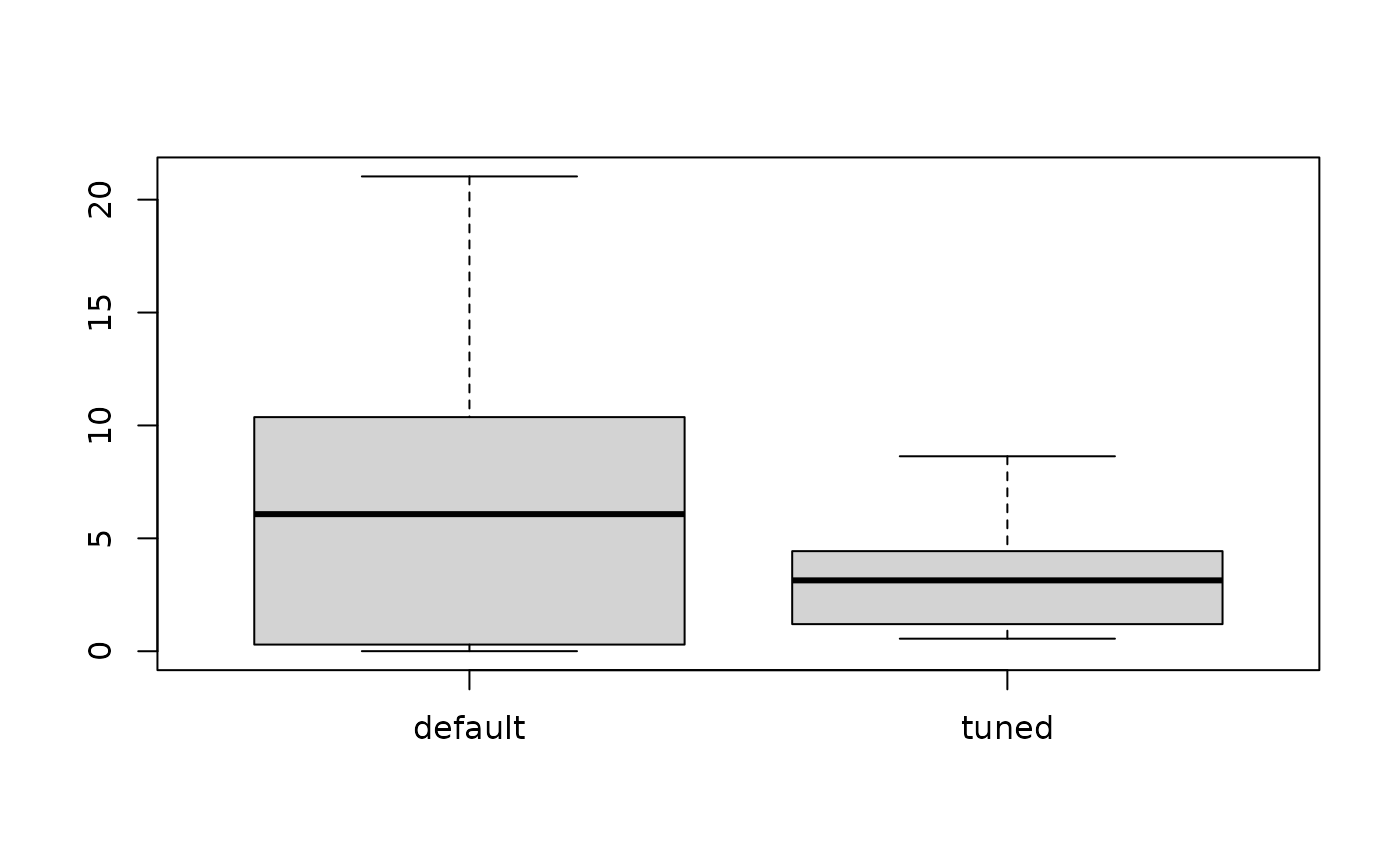

## We can evaluate the quality of the best configuration found by

## irace versus the default configuration of the SANN algorithm on

## the other 10 instances previously generated.

test_index <- 11:20

test_seeds <- sample.int(2147483647L, size = length(test_index), replace = TRUE)

test <- function(configuration)
{
  res <- lapply(seq_along(test_index),
                function(x) target_runner(
                  experiment = list(instance = weights[test_index[x]],
                                    seed = test_seeds[x],
                                    configuration = configuration),
                  scenario = scenario))
  return(sapply(res, getElement, name = "cost"))
}

## To do so, first we apply the default configuration of the SANN

## algorithm to these instances:

default <- test(data.frame(tmax=10, temp=10))

## We extract and apply the winning configuration found by irace

## to these instances:

tuned <- test(removeConfigurationsMetaData(tuned_confs[1,]))

## Finally, we can compare using a boxplot the quality obtained with the

## default parametrization of SANN and the quality obtained with the

## best configuration found by irace.

boxplot(list(default = default, tuned = tuned))

# }

# }
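Because the test function above evaluates both configurations on the same instances with the same seeds, the two cost vectors are paired, so a per-instance comparison can complement the boxplot. The sketch below shows one way to summarise such a comparison; the two vectors are made-up stand-ins for default and tuned, not results from an actual run.

```r
# Made-up costs standing in for the 'default' and 'tuned' vectors
# (one entry per test instance, same instance and seed at each position).
default <- c(2.1, 1.8, 3.0, 0.9, 2.5)
tuned   <- c(1.2, 1.9, 1.1, 0.4, 2.0)

# Positive differences mean the tuned configuration had lower cost.
diffs <- default - tuned

# Per-configuration summary and number of instances where tuned wins.
summary_table <- data.frame(
  configuration = c("default", "tuned"),
  mean_cost     = c(mean(default), mean(tuned)),
  median_cost   = c(median(default), median(tuned))
)
wins <- sum(tuned < default)

print(summary_table)
cat("tuned is better on", wins, "of", length(diffs), "instances\n")
cat("mean improvement:", mean(diffs), "\n")
```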